When enterprises evaluate AI data vendors, the discussion often centers on annotation accuracy, domain expertise, and delivery capacity. Yet many of the most consequential risks surface earlier in the lifecycle, before any annotation or modeling begins. Secure data operations, governed ingestion workflows, and controlled data handling practices are a critical but frequently underweighted dimension of AI vendor evaluation.

While enterprises often manage the collection and initial custody of physical data, the responsibility shifts to AI data partners at the point of ingestion, transformation, and operational handling. This is where secure data operations become critical, ensuring that data entering AI systems is governed, traceable, and protected from the outset.

For regulated and high-risk AI systems, data entering AI pipelines may originate as physical artifacts, digitally captured records, or hybrid datasets, including but not limited to medical records, diagnostic media, handwritten documents, and other sensitive enterprise data. How vendors receive, control, convert, and retire these assets directly affects safety, trust, and data quality. As a result, data operations governance should be assessed not as an operational detail, but as a signal of vendor maturity and governance rigor.

Why Secure Data Operations Matter in AI Workflows

Despite the growth of cloud-native AI pipelines, many enterprise AI programs still depend on physical or semi-physical data sources. These inputs, whether originating as physical artifacts, digitally captured records, or hybrid datasets, often contain sensitive clinical, financial, or personal information that carries strict regulatory and contractual obligations.

From an evaluation standpoint, inadequate controls during data intake, transfer, or operational handling constitute an upstream risk that downstream digital security cannot fully offset. Once the chain of custody is broken or confidential materials are mishandled, it is very difficult to restore trust in dataset integrity and, consequently, in model outcomes. Disciplined data operations and governance workflows are a good indication that a vendor is prepared for regulated, safety-critical AI work. Vendors that lack them introduce uncertainty that may surface only during audits or deployment reviews.

Why Data Operations Belong in AI Vendor Evaluation

Data operations bear directly on the criteria enterprises use to assess AI data vendors: safety, trust, and quality. Poor data handling during intake, transfer, access management, or conversion weakens downstream annotation and governance controls, even when those controls are otherwise strong.

Conversely, well-organized data operations demonstrate an organization’s maturity, its readiness for governance, and its capacity to support high-risk AI initiatives. By treating data operations governance as an explicit evaluation criterion rather than taking it for granted, enterprises can uncover risks early and make better-informed vendor choices.

Data Operations Risks That Reveal Vendor Weakness

Across AI programs, data operations span intake, transfer, storage, access, processing, and lifecycle management. Breakdowns between incoming data sources and operational systems expose risk categories that differ significantly from those of digital-only workflows. Even so, weaknesses at this stage often mirror broader gaps that later surface in downstream AI operations.

Such risks are not readily visible in surface-level vendor assessments:

- Loss or unauthorized access during transit or intake

- Gaps in chain-of-custody documentation

- Poor segregation of regulated or sensitive materials

- Uncontrolled duplication during scanning or conversion

- Undefined retention, return, or disposal practices

When viewed in the context of end-to-end data operations, these issues frequently correlate with parallel risks in digital environments, such as excessive access privileges, unclear ownership, insufficient audit trails, or inconsistent retention enforcement. For vendor evaluation purposes, they should not be treated as isolated operational failures. They indicate weaknesses in governance, accountability, and quality control, which are the main components of enterprise trust.

Secure Intake as an Indicator of Governance Maturity

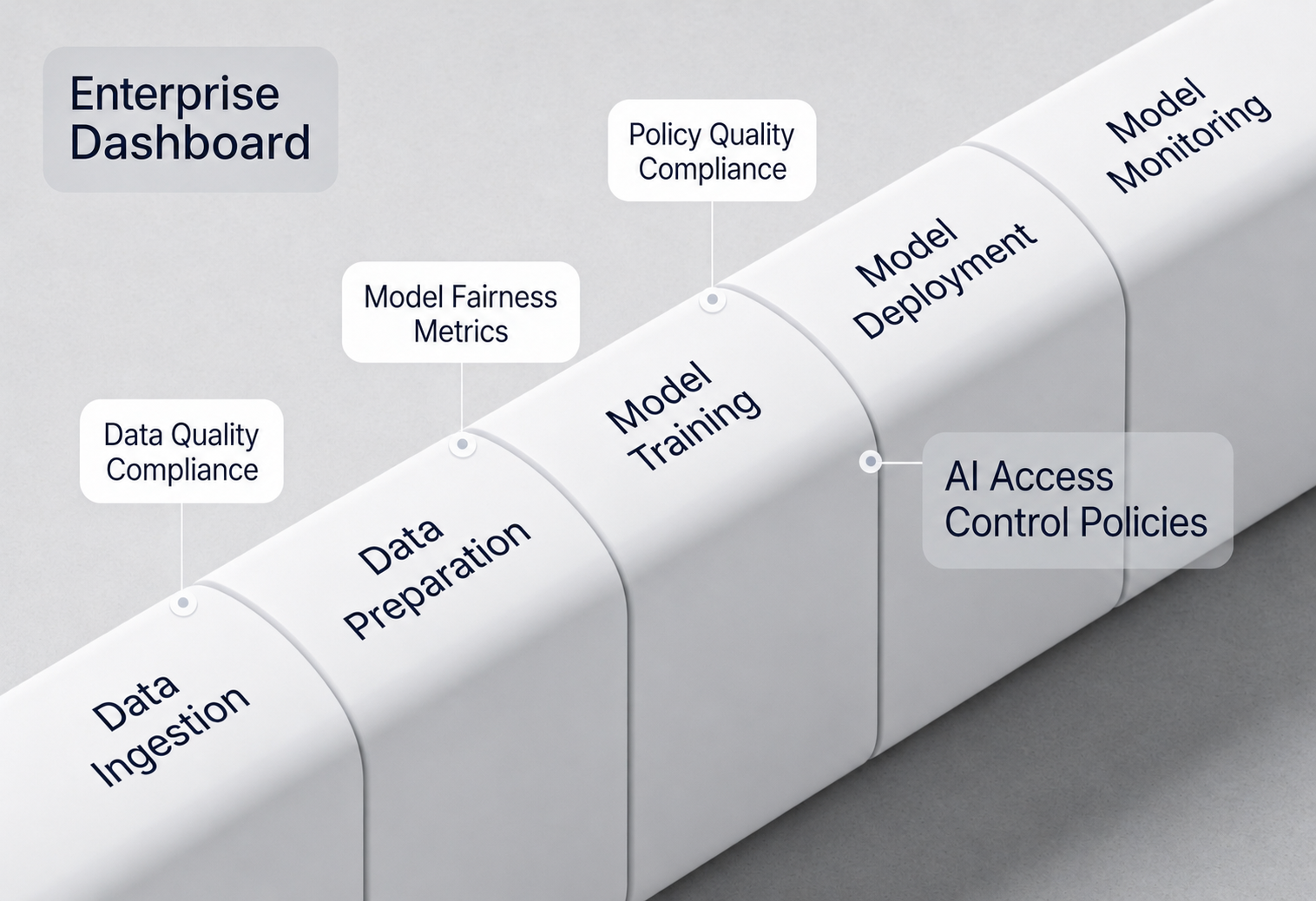

Secure intake begins when data is transferred from client-controlled environments into governed data operations systems. This stage reflects how effectively a vendor manages ingestion, validation, access control, and traceability once data enters their operational workflows.

Mature data operations systems establish provenance as soon as data is ingested. Incoming datasets are logged immediately upon receipt, assigned identifiers, access-controlled, and tracked throughout operational workflows. The result is a verifiable trail that supports audit readiness and regulatory defensibility.

When evaluating AI data vendors, enterprises should examine whether intake processes are formalized, repeatable, and documented, or are ad hoc and cannot be independently validated.
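To make the intake step concrete, here is a minimal Python sketch of what logging a dataset at receipt could look like. It is illustrative only and does not describe any particular vendor’s system: the `IntakeRecord` fields, the `register_intake` and `verify_unchanged` helpers, and the role names are all hypothetical.

```python
import hashlib
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class IntakeRecord:
    """Hypothetical provenance record created when a dataset is received."""
    dataset_id: str       # identifier assigned at receipt
    source: str           # label for the delivering party (assumed field)
    sha256: str           # content fingerprint taken at receipt
    received_at: str      # UTC timestamp in ISO 8601 format
    allowed_roles: tuple  # roles permitted to open the dataset


def register_intake(payload: bytes, source: str, allowed_roles: tuple) -> IntakeRecord:
    """Log a dataset at the moment of receipt and fingerprint its contents."""
    return IntakeRecord(
        dataset_id=str(uuid.uuid4()),
        source=source,
        sha256=hashlib.sha256(payload).hexdigest(),
        received_at=datetime.now(timezone.utc).isoformat(),
        allowed_roles=allowed_roles,
    )


def verify_unchanged(record: IntakeRecord, payload: bytes) -> bool:
    """Return True if the payload still matches the fingerprint taken at intake."""
    return hashlib.sha256(payload).hexdigest() == record.sha256
```

Recording a content hash at receipt is one simple way to let an auditor later confirm that the data being processed is the data that was delivered. A production system would also persist these records in tamper-resistant storage.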

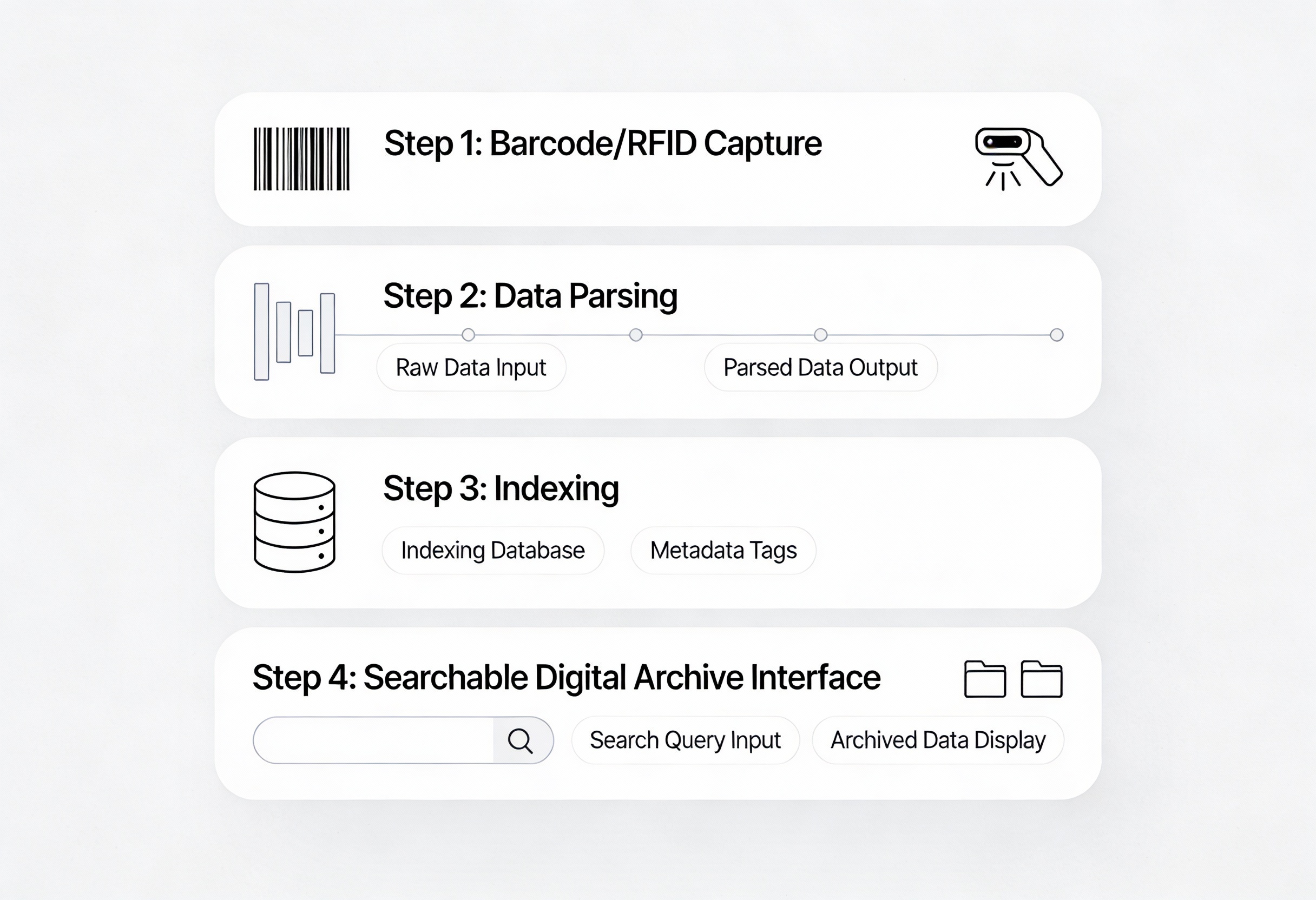

Controlled Conversion Environments and Data Integrity

Physical-to-digital conversion is a critical boundary in the AI data lifecycle. From an evaluation perspective, it represents a transition point where data integrity can either be preserved or irreversibly compromised.

Beyond procedural controls, technology plays a central role in governing this transition. Vendors with mature data operations rely on purpose-built systems that enforce access controls, role separation, and detailed activity logging by design, rather than through manual oversight. Scanning, indexing, validation, and integrity checks are embedded into conversion platforms, ensuring least-privilege enforcement, traceability, and consistency across workflows.

Enterprises should assess whether these controls are enforced systematically through technology rather than through procedural or manual oversight alone, and should view them as quality safeguards, not operational overhead. Well-architected conversion technologies ensure that digitized datasets faithfully represent their source data and prevent untracked manipulation during ingestion or transformation.
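One common way to make activity logging resistant to untracked manipulation is a hash-chained log, in which each entry commits to the one before it. The Python sketch below illustrates the idea under simplifying assumptions. The event fields (`step`, `operator`, `pages`) and the function names are hypothetical, and it does not describe any specific conversion platform.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    """Hash an event together with the hash of the entry before it."""
    body = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + body).hexdigest()


def append_event(log: list, event: dict) -> None:
    """Append a conversion event, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "event": event,
        "prev": prev_hash,
        "hash": _entry_hash(prev_hash, event),
    })


def chain_is_intact(log: list) -> bool:
    """Recompute every link; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each hash depends on all earlier entries, altering a past scanning or indexing record after the fact is detectable by anyone who re-verifies the chain. This sketch does not protect against an attacker who can rewrite the entire log. That requires anchoring the latest hash somewhere outside the vendor’s control.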

Secure Tooling, Infrastructure, and Controlled AI Workflows

Enterprise AI programs depend not only on governed human workflows, but also on the security and architecture of the systems through which data moves. Vendors should demonstrate that their tooling, infrastructure, and integration environments support secure, auditable, and compliant AI operations across cloud, on-premises, and hybrid deployments.

Mature data operations environments enforce governance through technology, not only through policy. This includes role-based access controls, environment segregation, encrypted data transfer, centralized logging, and infrastructure-level monitoring across annotation, review, storage, and ML operations workflows.
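As a rough illustration of governance enforced in code rather than by policy alone, the following Python sketch shows a deny-by-default, role-based access check that records every decision. The role names, the permission map, and the `request_access` helper are hypothetical. A real deployment would load roles from a managed policy store and write to centralized logging.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission map, used only for illustration.
ROLE_PERMISSIONS = {
    "annotator": {"read"},
    "reviewer": {"read", "comment"},
    "data_steward": {"read", "export", "delete"},
}

# In-memory stand-in for a centralized audit log.
audit_log = []


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are refused."""
    return action in ROLE_PERMISSIONS.get(role, set())


def request_access(user: str, role: str, action: str, dataset_id: str) -> bool:
    """Decide an access request and record the decision, allowed or not."""
    allowed = is_allowed(role, action)
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "dataset_id": dataset_id,
        "allowed": allowed,
    })
    return allowed
```

Logging refusals as well as grants matters for evaluation, since repeated denied requests are often the earliest visible sign of misconfigured roles or attempted misuse.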

Enterprises should also evaluate how vendors integrate with client systems and manage controlled data movement between environments. Secure APIs, governed ingestion pipelines, isolated workspaces, and audit-ready integrations help maintain traceability across the AI lifecycle while reducing operational risk.

For regulated AI programs, infrastructure readiness is equally important. Vendors with established security certifications, documented compliance processes, and controlled operational environments provide stronger assurance that sensitive enterprise data can be handled consistently and securely at scale. This increasingly aligns with broader enterprise frameworks for evaluating AI vendors across safety, trust, and quality dimensions.

Human Expertise Without Unmanaged Exposure

Human involvement is unavoidable in enterprise data workflows, especially in regulated domains. The evaluation question is not whether humans are involved, but how that involvement is governed.

Strong vendors pair expert-led workflows with enforceable operational safeguards. Access is role-based, environments are monitored, and responsibilities are clearly defined and auditable. They also invest in structured training to ensure individuals understand security obligations, data handling procedures, and regulatory requirements before working with sensitive data.

From an evaluation perspective, enterprises should examine how vendors prepare and assess people for these roles. This includes role-specific onboarding, ongoing training, supervision, and periodic validation of compliance and data-quality performance. This approach allows human judgment to improve data quality while containing risk.

Vendors that rely on informal trust rather than structured controls, including formal training and assessment, expose enterprises to hidden compliance and security vulnerabilities.

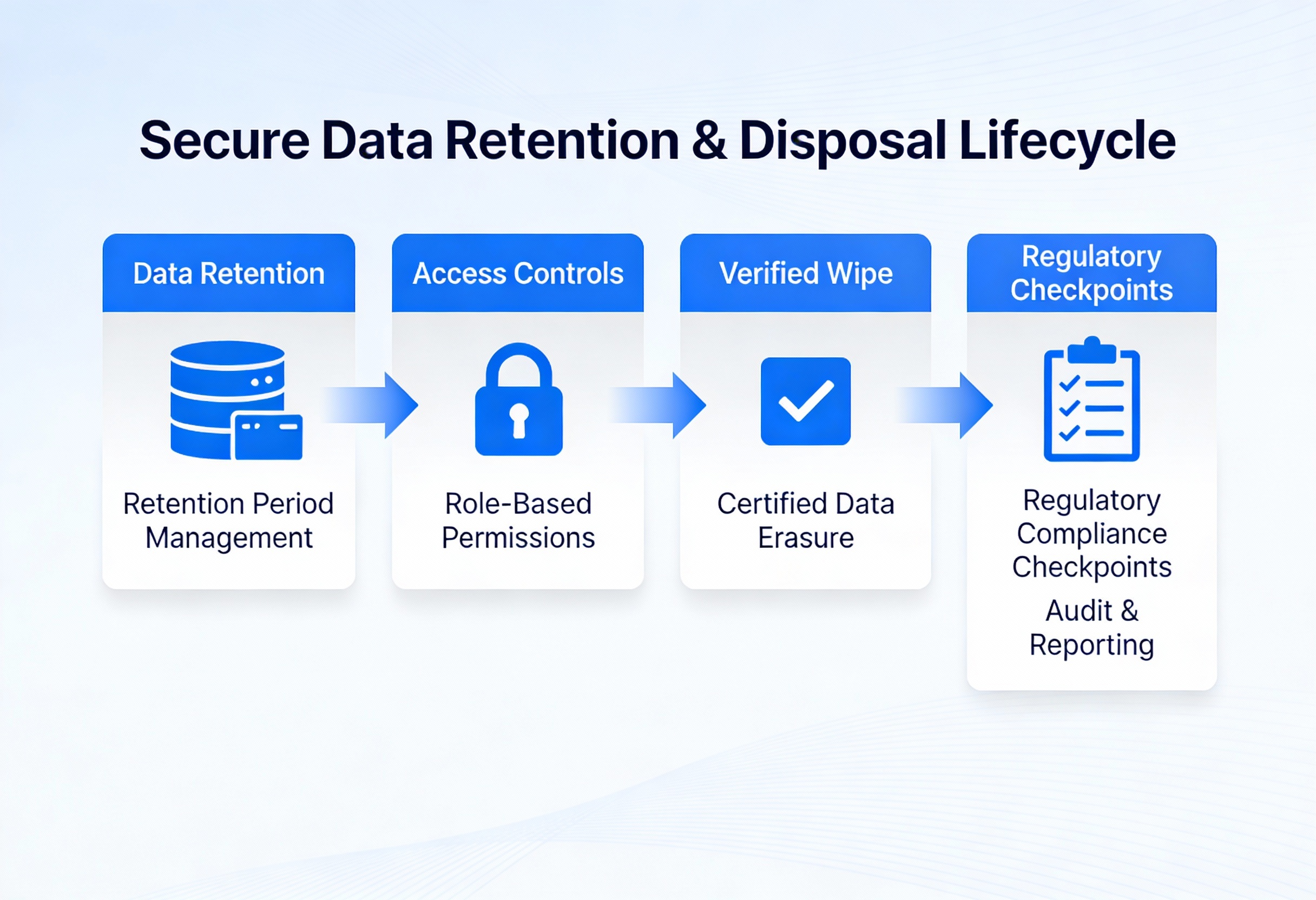

Retention, Return, and Secure Disposal as Trust Signals

How a vendor handles the end of the data lifecycle says a lot about the maturity of its data operations model, yet it is one of the most frequently overlooked aspects of vendor selection. Established vendors have well-defined policies for:

- Data retention that complies with regulatory and contractual requirements

- Secure storage during retention periods

- Verified return or archival processes

- Secure, documented disposal with audit evidence

For enterprises, having clarity at this point is a trust signal. Vendors who are unable to explain how data is removed from their operational environment represent a long-term governance and compliance risk that extends beyond the active project lifecycle.
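The retention and disposal policies listed above lend themselves to simple automated checks. The Python sketch below shows one hypothetical form such a check could take. The `RetentionRecord` fields and helper names are assumptions for illustration, and the retention period is treated as an input taken from the applicable contract or regulation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class RetentionRecord:
    """Hypothetical lifecycle record for one dataset."""
    dataset_id: str
    received_on: date
    retention_days: int                      # set by contract or regulation
    disposed_on: Optional[date] = None       # None while data is still held
    disposal_evidence: Optional[str] = None  # e.g. a certificate reference


def disposal_due(record: RetentionRecord) -> date:
    """Date on which the retention period ends."""
    return record.received_on + timedelta(days=record.retention_days)


def overdue_for_disposal(records: List[RetentionRecord], today: date) -> List[str]:
    """Datasets still held after their retention period has ended."""
    return [
        r.dataset_id
        for r in records
        if r.disposed_on is None and today > disposal_due(r)
    ]


def missing_evidence(records: List[RetentionRecord]) -> List[str]:
    """Datasets marked as disposed without any recorded audit evidence."""
    return [
        r.dataset_id
        for r in records
        if r.disposed_on is not None and not r.disposal_evidence
    ]
```

A vendor able to produce the equivalent of these two reports on request, with supporting evidence, is giving a concrete answer to the question of how data leaves its environment.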

Reducing Enterprise AI Risk Before Modeling Begins

As AI systems move closer to real-world decision-making, enterprises can no longer afford blind spots in their data foundations. While operational governance may be less visible than model metrics, its impact on compliance, auditability, and trust is nevertheless significant.

Understanding how vendors manage secure data operations, from intake and transfer to governed processing and secure disposal, enables organizations to reduce foundational risk even before AI development begins. This governance-first approach not only reduces exposure to security and compliance failures but also supports the safe deployment of AI systems that can meet regulatory and operational requirements.

iMerit’s data operations model focuses on secure ingestion, governed workflows, controlled data transfer, and human-led quality processes that ensure enterprise AI programs meet safety, trust, and compliance expectations.